One item of feedback I received on my initial P2P database post was that it was missing practical examples to engage the layperson. In this post, I’ll try to rectify that, using a couple of the examples I mentioned before. Incidentally, I’ve named it: the project is now called Meshing, hopefully for fairly obvious reasons.

Careers recruitment

James works for a global jobs aggregator website, GreatJob.com, in their operations department. His role is to negotiate with recruitment companies of various sizes to supply recruitment ads for their various web properties (UK technology, US engineering, NZ finance etc). In some cases GreatJob.com becomes a recruitment intermediary, and so receives a finder’s fee for every successful placement; in a second category, agencies pay an ongoing rate to have their vacancies featured; and the GreatJob websites generate additional income from on-site advertising.

However, all is not well in the GreatJob.com camp. James knows from feedback that vacancies are often duplicated, so users who get a “You have 1 new matching job” message are used to discovering that the “new” job is just a copy of one they’ve already seen. Partly this is due to data being entered by technophobic recruitment consultants (computer frozen? Click “Send” a third time, that might help!), and partly to some roles being advertised by more than one agency. At least the first source of duplicates could be weeded out by GreatJob.com, but the business isn’t good at responding to technology issues quickly enough.

Furthermore, adverts are often mis-categorised by location, and the match system relies on nothing more than a basic keyword search, so results are generally poor. The end result is that users receive lots of job alerts, but most of them are unsuitable. This slows the recruitment process down, resulting in unhappy users. While GreatJob.com is a viable business, James knows it could be much better, and so quits to found his own web-based recruitment start-up.
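As an aside, the repeat-submission kind of duplicate mentioned above is the easy kind to catch. Here is a minimal sketch of one way it might be done, assuming each advert arrives as a dictionary with `title`, `employer`, `location` and `description` keys (these field names are my own invention for illustration, not anything GreatJob.com or Meshing defines). It fingerprints a normalised copy of each advert and keeps only the first one seen.

```python
import hashlib
import re


def fingerprint(advert):
    """Hash a normalised copy of the advert's main fields (hypothetical field names)."""
    parts = []
    for field in ("title", "employer", "location", "description"):
        text = advert.get(field, "").lower()
        # Collapse every run of non-alphanumeric characters into a single space,
        # so stray punctuation and whitespace do not produce a different hash.
        parts.append(re.sub(r"[^a-z0-9]+", " ", text).strip())
    return hashlib.sha256("|".join(parts).encode("utf-8")).hexdigest()


def drop_repeat_submissions(adverts):
    """Keep the first advert seen for each fingerprint, preserving order."""
    seen = set()
    unique = []
    for advert in adverts:
        key = fingerprint(advert)
        if key not in seen:
            seen.add(key)
            unique.append(advert)
    return unique
```

This only catches near-identical resubmissions. The same role advertised by two agencies in different words would need fuzzier matching, which is exactly the sort of thing a small, agile team could compete on.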

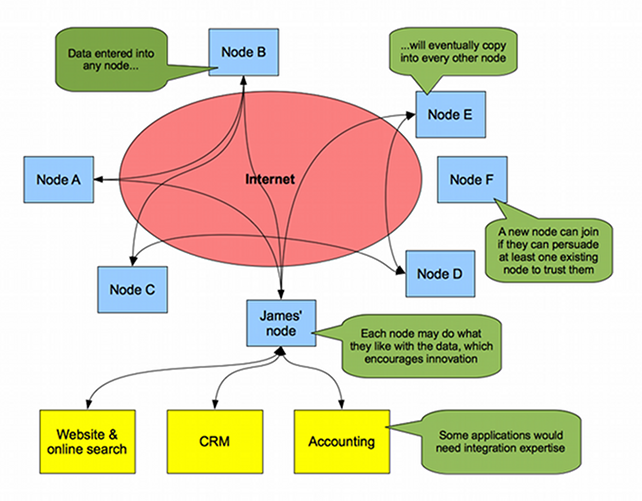

He is well aware that he can’t compete with the size of his previous employer by himself, so decides on a gamble. Where he strikes a deal with a recruitment company, position data will be shared both ways: the recruitment company get their advert in the database, and they also get an ongoing copy of the live database. To achieve this, James sets up a Meshing node, and develops a website encouraging small recruitment consultants around the world to do the same. Each of them sets up a “trust relationship” in their Meshing node with other consultant firms, each having their own node; where such a relationship exists, updates flow in both directions. Since they all have to trust at least one node to join, all nodes eventually get all changes. Schematically, it looks a bit like this:

The arrangement does mean that all consultants involved lose control of their data, but the payoff is that they all get a good chance of competing with larger players. Furthermore, although Meshing does not enforce contribution minimums, it is likely that any node not making many useful changes would soon lose its trust relationships, thus keeping the amount of free-riding low. An interesting business model might grow around this arrangement, such as: the agency website that attracts a job-seeker receives 50% of the fee, with the remainder going to the agency that found the opportunity.
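To make the “all nodes eventually get all changes” claim a little more concrete, here is a toy sketch of updates flowing along trust relationships. It is a naive flood, written purely for illustration: the `Node` class, its methods and the change identifiers are my own assumptions, not Meshing’s real design or API.

```python
class Node:
    """A toy stand-in for a Meshing node (hypothetical, not the real API)."""

    def __init__(self, name):
        self.name = name
        self.peers = []    # nodes this node has a trust relationship with
        self.changes = {}  # change_id -> payload

    def trust(self, other):
        """Create a two-way trust relationship between two nodes."""
        if other not in self.peers:
            self.peers.append(other)
            other.peers.append(self)

    def receive(self, change_id, payload):
        """Apply a change once, then pass it on to every trusted peer."""
        if change_id in self.changes:
            return  # already seen, so stop here and let the flood terminate
        self.changes[change_id] = payload
        for peer in self.peers:
            peer.receive(change_id, payload)


# Four agencies trusting each other in a ring; a change made at one reaches all.
james, anna, ben, cara = (Node(n) for n in ("james", "anna", "ben", "cara"))
james.trust(anna)
anna.trust(ben)
ben.trust(cara)
cara.trust(james)
cara.receive("job-1", {"title": "Search engineer"})
```

A node with no trust relationships receives nothing, which is also how a free-riding node would gradually be cut out of the flow.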

Now that each site is operating on a more level playing-field, they can differentiate themselves on their candidate lists, or – as James intends – on their search quality. So, he hires a search engineer (using a big chunk of his VC fund!) and starts to challenge the marketplace with a significantly better product than the old, closed business model was able to bring about.

Addendum: would recruitment agencies try this? Short answer – I have no idea. But I think business models that rely on keeping data secret are outdated in the information age, and people need to experiment to improve upon the old ones. My thinking is that having lots of small, agile teams competing using the same dataset will foster innovative ways to present, search and de-duplicate the data.

No-agency recruitment

Gemma is an experienced programmer, and prefers to move to a new employer every two years to keep her skills sharp. However, she finds the process onerous, since she likes to deal with prospective employers directly, but the market is largely cornered by agencies – and finding high-quality sources of direct opportunities is not trivial. She decides to set up her own website as an experiment, for anyone to advertise “no agency” roles free of charge.

To start with, Gemma sets up an automated process to populate her database with jobs from GeekUp (plus other similar sites with an RSS feed). She also sets up a simple form that lets any prospective employer add roles as well. Now here’s the clever bit: she integrates it with Meshing. She is soon approached by a couple of other developers who like her idea, and ask to mirror her dataset. She readily adds trust relationships to their Meshing nodes (it’s just an experiment, so she doesn’t care about freeloaders). Soon, other developers are also contributing jobs into the system, and before too long they have thousands, changing the nature of the marketplace.
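Gemma’s automated import could be quite small. The sketch below assumes a plain RSS 2.0 feed and uses only Python’s standard library; the sample feed, the example.com links and the `jobs_from_rss` function are made up for illustration, and I am not claiming this is what GeekUp’s feed actually looks like.

```python
import xml.etree.ElementTree as ET

# A made-up RSS 2.0 feed standing in for a real jobs feed.
SAMPLE_FEED = """<rss version="2.0">
  <channel>
    <title>Example jobs feed</title>
    <item>
      <title>Python developer (no agencies)</title>
      <link>https://example.com/jobs/1</link>
      <description>Small team, direct applications only.</description>
    </item>
    <item>
      <title>Front-end developer</title>
      <link>https://example.com/jobs/2</link>
      <description>Contact the hiring manager directly.</description>
    </item>
  </channel>
</rss>"""


def jobs_from_rss(xml_text):
    """Turn each <item> in an RSS 2.0 document into a plain dictionary."""
    root = ET.fromstring(xml_text)
    jobs = []
    for item in root.iter("item"):
        jobs.append({
            "title": item.findtext("title", default="").strip(),
            "link": item.findtext("link", default="").strip(),
            "description": item.findtext("description", default="").strip(),
        })
    return jobs


jobs = jobs_from_rss(SAMPLE_FEED)
```

Each dictionary could then be written to her local database, from where her Meshing node would share it with the nodes she trusts.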

This is a different proposition to James’ model, but he may have to watch out: agencies would increasingly need to prove that they can add tangible value to the recruitment process, otherwise they wouldn’t survive.

Addendum: will this work? Short answer – definitely! No-agency roles presently have to fight for space in a search-engine blizzard of duplicate/stale agency ads, and direct advertisers use a mix of social media, blogs, local job sites, offline ads etc. that don’t have a particularly long reach. If lots of small, well-engineered sites can offer the same dataset in various ways, employers will be more likely to advertise directly in the first instance.

Public transport routes

Mo owns a search and mapping company, and is interested in developing online tools to let users search for public transportation routes from one location to another. He is aware that large companies such as Google offer this facility, but a good number of routes are often missing or out of date. In any case, Mo doesn’t think the big corporate players should be left to dominate the market! He is planning to offer integrated search systems to transport operators looking to encourage take-up of their travel services (a train company can offer recent data on which buses to take to an onward destination, based on their train’s usual arrival time). He is also considering seeking funding from municipal councils and tourism bodies, or anyone interested in increasing take-up of public transport.

For every train/bus/coach/tube route, GPS co-ordinates should be stored for every stop, and the planned begin/end time of each route segment. The table format should be able to cater for exceptions, such as: “this service runs as stated except if it is Christmas day” or “this service doesn’t run on Bank Holidays” etc. Nodes may choose to accept updates from a public web form, but it would make sense for a moderator to double-check them (perhaps against the static timetables that some operators publish on their websites).
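One possible shape for that data is sketched below, as Python dataclasses rather than database tables. All the class and field names are my own assumptions for illustration, and the list of bank holidays is passed in by the caller rather than built in.

```python
from dataclasses import dataclass, field
from datetime import date, time


@dataclass(frozen=True)
class Stop:
    name: str
    latitude: float   # GPS co-ordinates of the stop
    longitude: float


@dataclass(frozen=True)
class Segment:
    origin: Stop
    destination: Stop
    departs: time  # planned begin time of this route segment
    arrives: time  # planned end time of this route segment


@dataclass
class Service:
    name: str
    segments: list
    weekdays: set = field(default_factory=lambda: set(range(7)))  # 0 = Monday
    skips_bank_holidays: bool = False
    excluded_dates: set = field(default_factory=set)  # one-off exceptions

    def runs_on(self, day, bank_holidays=frozenset()):
        """Return True if the service runs on the given date."""
        if day in self.excluded_dates:
            return False
        if self.skips_bank_holidays and day in bank_holidays:
            return False
        return day.weekday() in self.weekdays


# Illustrative data only: the stops and co-ordinates are invented.
stop_a = Stop("Station A", 52.48, -1.90)
stop_b = Stop("Station B", 52.45, -1.93)
bus = Service(
    name="Route 1",
    segments=[Segment(stop_a, stop_b, time(9, 0), time(9, 12))],
    skips_bank_holidays=True,
    excluded_dates={date(2024, 12, 25)},  # "runs as stated except on Christmas Day"
)
```

Keeping exceptions as data on the service, rather than as free text, means a moderator can check a submitted update against an operator’s published timetable field by field.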

Addendum: would this work? Short answer – maybe, if enough transport operators are amenable. The biggest potential barrier is if an operator decides that their schedules are copyright (I think they might be within their rights to do so, in the UK at least). The only way transport data can be useful is if it is made available for any purpose, free of charge. However, the legalistic record of train operators (regarding the repurposing of their own data feeds) doesn’t bode well. Of course, if all bus/train companies offered decent XML feeds without any restrictive licensing, a separate solution would not be necessary!
